When you think of running a diary study, we guess that Confluence isn’t the first research tool that comes to mind. Confluence is best known as a tool for knowledge management and team collaboration, not as a platform for hosting a diary study, but with limitation comes creativity. In an effort to overcome the limitations in our research process, we discovered an innovative and sustainable means to interact with our user population. From adapting Confluence into a longitudinal research tool to removing research tools and touchpoints, we’ve redesigned our research process to support ongoing contact with our user population. Through our journey, we’ve found three key learnings that have removed friction in the participation experience and improved the quality of our work.

- Design with your participant’s experience in mind, not just your preferred methodology.

- Use your knowledge of your population’s existing workflows to identify data collection opportunities. Augment those existing touchpoints or tools, rather than introducing new tools or ‘more work’.

- Avoid adding more ‘noise’ and notifications to their daily life by distributing (or even conducting) surveys through unexpected modalities like Slack.

But first, let’s establish how we got there:

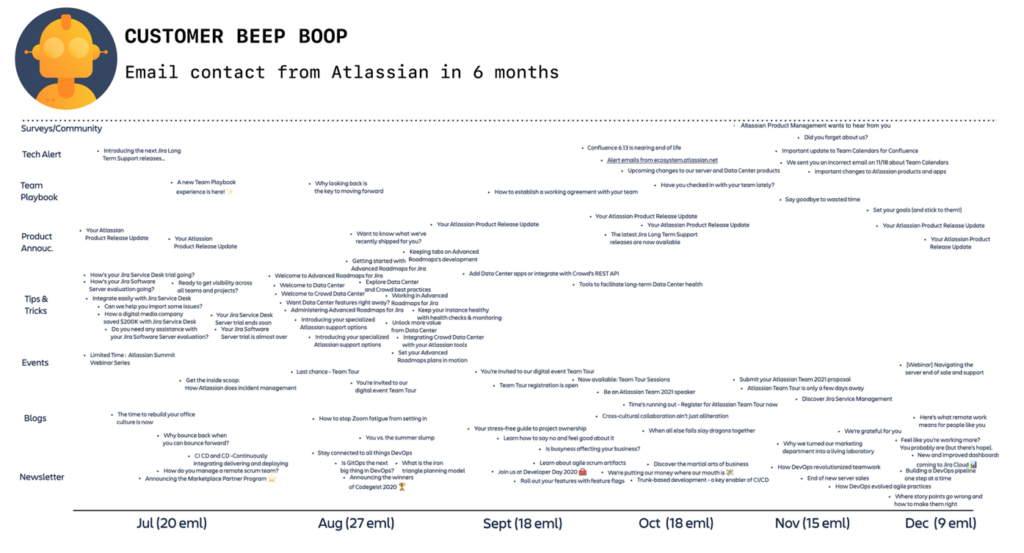

Our challenge was that the well-oiled Atlassian research machine was optimised for exploring customer needs, but the Partner community who make up part of our ecosystem were struggling under the burden of frequent, overwhelming contact. Our partners represent a small community of talented people who build apps and integrations for the Atlassian Marketplace. We’re fortunate that they are a super-engaged, specialised group of people eager to give feedback. However, there are only about 500 of these organisations – a stark contrast to the millions of users for Jira or Confluence. As researchers, we naturally approached our research questions by selecting the best tools or methods for the topic at hand. Unfortunately, some of our go-to research methods were leading this small population to feel overwhelmed and underappreciated.

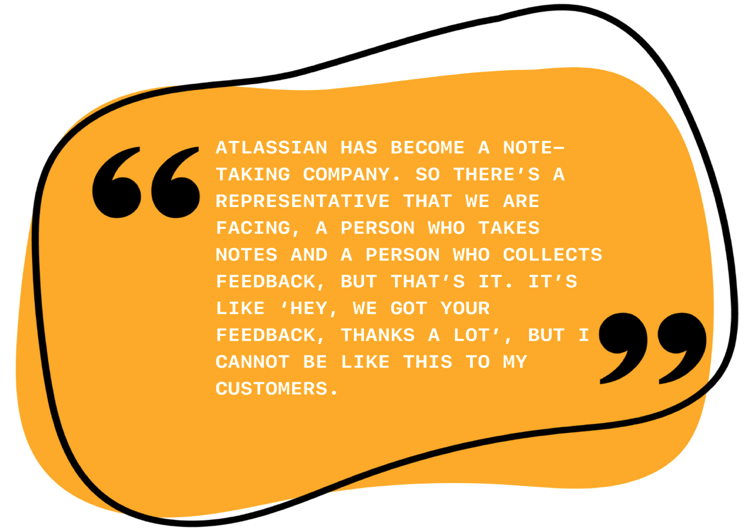

A survey here, an interview there, an early adopter program, a longitudinal study…yet another survey…our requests for research participation exceeded our partners’ appetite for giving feedback. Worse still, in the past, we had failed to show gratitude for our partners’ ongoing participation – while these partners were happy to give feedback, they often never heard back from us after the fact. Their experience with us became a one-way transaction rather than a reciprocal partnership. Some hard feedback from a community member gave us pause.

Shared with permission (quote & name) from one of our Marketplace Partners, Dmitry Astapkovich.

The Feedback Fatigue that our partners experienced is not unique to Atlassian or to niche populations like the Marketplace Partner community. Feedback Fatigue is a risk for any researcher who has multiple interactions with the same community over time. However, the risk is greater now in the context of remote communication. The populations we study are experiencing higher levels of communication fatigue than ever before.

The consequences of feedback fatigue may be more acute in smaller populations, but they offer a warning about the unseen challenges in larger communities. In our case, our partner population was a “canary in the coal mine” that alerted us to a wider concern about sustainable research practices at Atlassian. To maintain our close connection with our partner community, we had to make a change to our process. We’ve redesigned that well-oiled research process – injecting new life into textbook methods so that our community feels valued and engaged long term.

The feedback we received from our Partners reminded us of an important lesson: achieving the best quality of insight from a small community requires you to design for the experience of participating, and not the research method and outcome alone.

We have to ask ourselves, would we want to participate in our studies?

The benefit of working within a technology organisation is that we have many creative humans and the best tools at our fingertips. So we looked to the tools and teams around us to better our research approaches. Our learnings can hopefully inspire other teams to find better ways to engage with their communities.

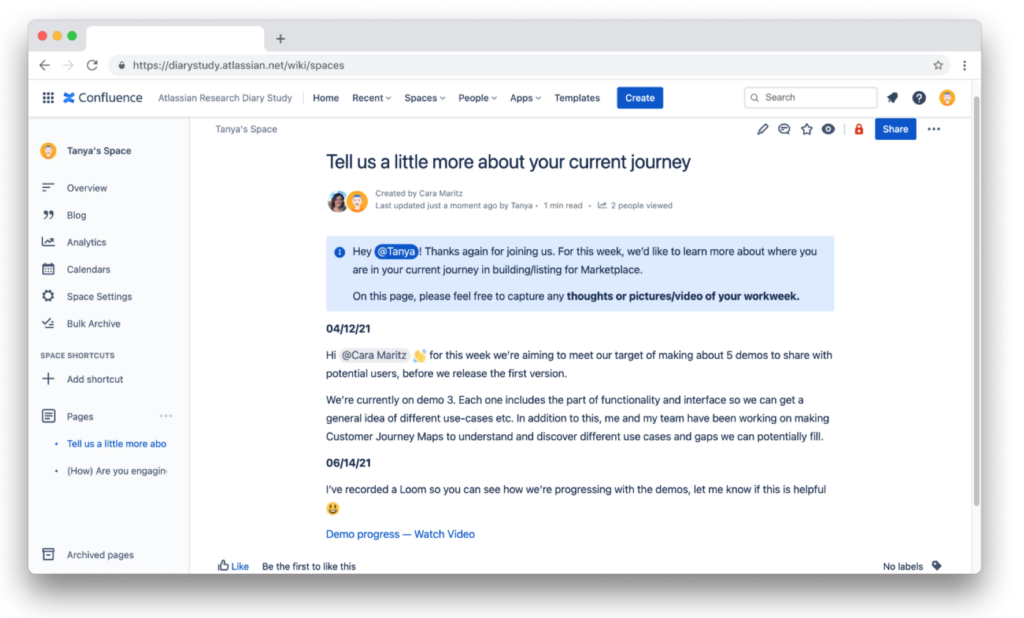

Avoiding Diary-Study Burnout by Using Confluence as a Research Tool

Even among the most seasoned researchers, longitudinal studies have a reputation for being notoriously difficult to keep engaging and to retain participants in. The idea of rolling out a diary study to avoid burnout might even sound like an oxymoron. But when we tallied the knowns and unknowns of existing research and insights in this space, it was clear that there were gaps in our understanding of the end-to-end journeys of our partners. We needed a clear path to discover and capture in-context experiences and touchpoints with the Atlassian developer platform rather than relying solely on retrospective data, or on methods that depend on our participants’ memory of past habits, pain points and behaviours. While our major priority was to get an accurate understanding of these journeys and experiences, we also wanted to build out a sustainable piece of research for our partners, as well as juggle the complexities generated by a feedback-fatigued population. We needed to design a study that could mitigate burnout and drive connection.

Confluence, a Well-Known Tool and Easy to Use

We discovered that our participants were quick to orient themselves and contribute content. We eliminated the burden of potentially learning a new tool by inviting our participants to join us in an already familiar platform.

Flexibility to Capture and Share Limitless Content in a Structured Format

Participants made use of the flexible nature of Confluence to upload many types of content, not just text. In their weekly diary entries, we received photos, videos (Looms and Zoom recordings), and text. Our participants could freely express themselves with whatever content best communicated their experience. This flexibility also helped our participants share their stories (and emojis) in ways most familiar to them. As a result, we could get to know them rather than forcing unnecessary boundaries or formalities.

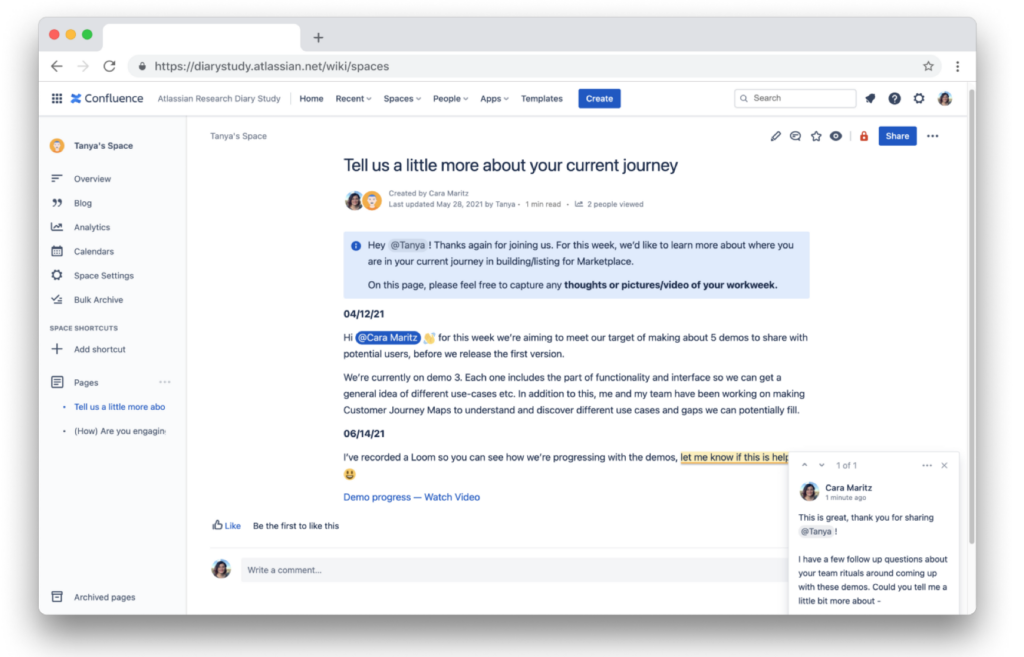

Optimised for Async Collaboration

Our Marketplace Partners are based all over the world, so Confluence’s asynchronous collaboration was perfect. By choosing a tool optimised for async collaboration, we unlocked opportunities to probe, discuss and dig a little deeper into key areas of interest or experiences.

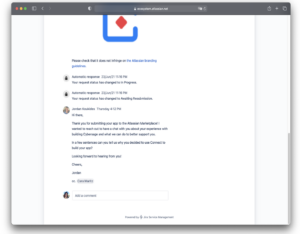

Intercepting not Interrupting with Jira Service Management

We needed to explore more granular aspects of our partners’ experience, the type of detail that would be difficult to recall retrospectively in a survey or an interview. Traditional approaches to intercepting and capturing in-context data (like in-product surveys) would interrupt our partners’ workflow and be met with frustration. Instead, we made use of a tool already in use in the interaction between partners and Atlassian – Jira Service Management (JSM). JSM is often employed as a help desk tool. In our context, partners use this tool to submit a request when listing a new application on our Marketplace.

Our researchers joined in on this existing interaction, adding to the conversation to ask partners key questions about their workflow and decision-making in the moment.

This new form of intercept was effective with our community because:

- We avoided adding tools, channels of communication, or effort to our partners’ experience. By augmenting an existing process, we minimised the disruption to their work.

- We used a personalised approach sent by actual team members (not automated bots) to facilitate sharing and convey the importance of the community to our process. Evidence shows that sharing by a researcher can encourage reciprocal disclosure from participants during the study. Anecdotally, we saw some indicators of this in our approach.

Multi-modality Surveys

Survey research is particularly challenging in our small community as our population size does not support frequent sampling. When we survey broad, large-scale user bases, we can do so on a monthly cadence or ad hoc as questions arise. Frequent surveying is manageable in a world where we might have millions of users to sample. With a small audience in the hundreds or sometimes low thousands of partners and developers, it’s almost impossible to obtain statistically significant volumes of feedback.
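To put rough numbers on the sampling problem, here is a minimal sketch using the standard sample-size formula for a proportion with a finite population correction. The function name `required_sample_size`, the 95% confidence level (z = 1.96), the ±5% margin of error and the worst-case proportion of 0.5 are all our assumptions for illustration, not figures from our studies. The population of 500 is the approximate number of partner organisations mentioned earlier.

```python
import math


def required_sample_size(population, margin_of_error=0.05, z=1.96, proportion=0.5):
    """Responses needed to estimate a proportion within +/- margin_of_error.

    Uses Cochran's formula with a finite population correction.
    The defaults (z=1.96 for 95% confidence, worst-case proportion 0.5)
    are illustrative assumptions, not values taken from the study.
    """
    if population <= 0:
        raise ValueError("population must be positive")
    # Sample size for an effectively infinite population.
    n0 = (z ** 2) * proportion * (1 - proportion) / (margin_of_error ** 2)
    # Shrink it to account for the finite population.
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


if __name__ == "__main__":
    # Compare a partner-sized population with a large product user base.
    for size in (500, 1_000_000):
        needed = required_sample_size(size)
        share = needed / size
        print(f"population {size:>9,}: {needed} responses ({share:.1%} of population)")
```

Under these assumptions, a population of 500 needs 218 responses, which is more than 40% of the whole community, while a population of a million needs only 385. Asking that share of a small community to respond to every survey is what makes frequent sampling unsustainable.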

We reduced the survey burden and boosted our engagement by revising our survey distribution strategies. Typically, we would email a survey link to our partners, but our partners are bombarded by email communication. Our email would be lost in the noise and add to their existing communication overload – a feeling that most of us can relate to in the remote work environment.

Instead, we use existing community structures and interactions to distribute our surveys. In our case, this means:

- Front line team members conduct surveys during their regular interactions with Partners.

- We distribute survey links on community forums (e.g., https://community.atlassian.com/) and in existing newsletters.

- We conduct our surveys using asynchronous (but personalised) modalities of communication, e.g., community forum threads or Slack channels (try Slack Connect to add external people to your Slack workspace).

- We limit and consolidate the survey approaches we make to our Partners, enabling us to engage more meaningfully with the community when inviting them to give feedback.

Although these innovations to our study design were spurred by very critical feedback, ultimately, the learnings have been for the betterment of both our insights and the community of Partners. We’ve already seen renewed, positive engagement from our community of users. One particular Partner shared with us the personal value they gain from engaging in our studies:

![I’m making my retrospective with you since I’m a solo developer. [It’s] kind of catching up and making my retrospective. After our previous calls, my motivation was higher to develop app capabilities and add some new capabilities. It’s a motivator for me to talk with you.](https://www.epicpeople.org/wp-content/uploads/2023/06/McCurrie9-1024x726.png)

Shared with permission (quote & name) from our Marketplace Partner, Turgay Çelik.

Skip the Learning Curve – Take It from Us

For those of you who interact with a population many times in your research program, avoid our learning curve and try implementing our key takeaway:

If you’re designing a research program that needs to support long-term, sustained engagement, look for ways to reduce friction to participate. This is especially important if you have a small population of users that can be fatigued more rapidly than larger populations.

- Participant empathy: You can reduce the friction to participate by better understanding the experience of those participating in your research. It’s about engaging in some participant empathy.

- Augment, don’t add: Rather than re-inventing the wheel with your research program by introducing new tools or communication channels, prioritise reducing friction in your participants’ existing experience.

- Consider your tradeoffs: You might find that augmenting the existing experience leads you to steer away from the ‘best’ or your preferred research methodology. We find this to be a worthwhile compromise when you can deliver better quality engagement and, in turn, better data.

If you’re working in an applied or organisational research setting, then you will be accustomed to making trade-offs between rigour and practicality. Consider participant experience and feedback fatigue as additional factors to weigh up when you design your study. As you do, ask yourself a few key questions to help establish and mitigate the effects of feedback fatigue:

- How does your study fit within the larger context of feedback requests of your sample population?

- How might you reduce the friction to participate in your study?

- How might you ensure that your participant feels that their time has been respected and their feedback was heard?

Big thank you to those within Atlassian’s research team and Atlassian’s Ecosystem team who have supported and engaged in this work. Particularly: Cara Maritz, champion of creativity in this research program, an unstoppable advocate of partner experience, and the driver of many of the methods described here; Jake Moody, our survey and quantitative mastermind, always orienting the team to sampling considerations and stamping out poor survey design; Sharon Dowsett, research leader, addressing the hidden work required to allow programs such as this to succeed, and empowering researchers to do their best work.

Atlassian is an EPIC2021 Sponsor. Sponsor support enables our unique annual conference program that is curated by independent committees and invites diverse, critical perspectives.
