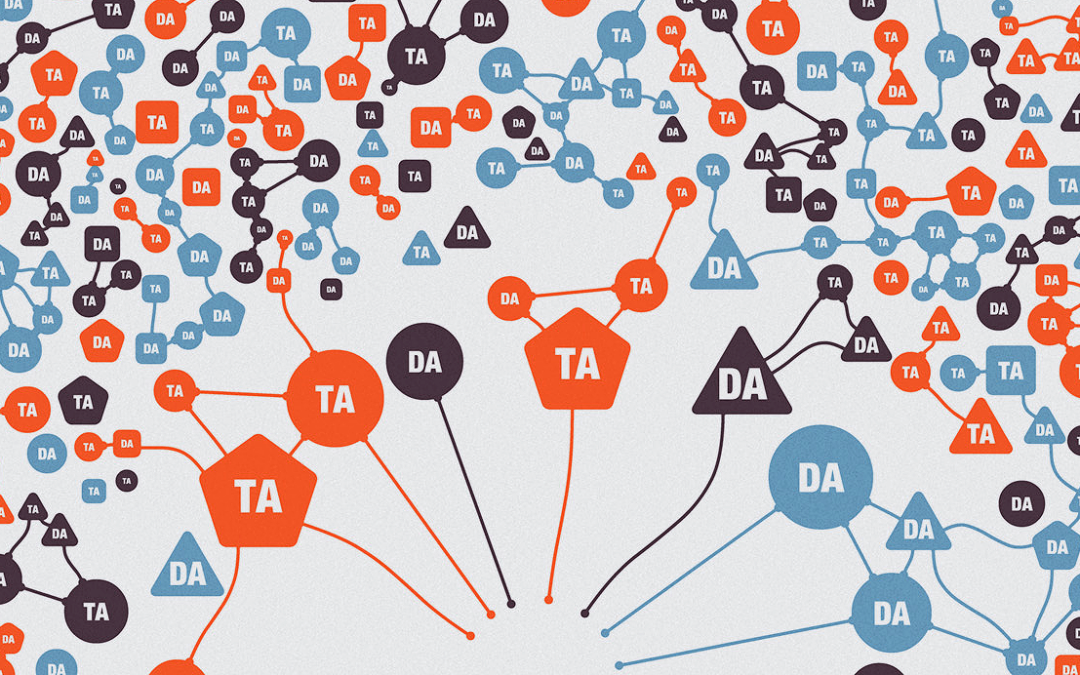

This paper details ethnographic methods, experiences, and insights from an ethnographer and an industry-engaged complex systems engineer on how to study resilience in blockchain-based DAOs as a novel field site....

Learn models and principles to ensure organizations are creating, using, and deploying AI that coworkers, customers, and society can trust. Overview: Our lives are directed, enriched, influenced, and sometimes...

Artificial intelligence (AI) has made huge strides recently in areas like natural language processing and computer-generated images – every other week seems to bring another breathtaking headline. Engineers, developers, and policymakers in the AI community are more seriously grappling with the...

Overview: Technology companies have...

It is easy to become pessimistic, if not dystopic, about tracking technologies. The current digital services landscape promotes scoring, selecting and sorting of people for the purposes of maximizing profit. Machine logics rely on profiling characteristics and predicting actions, and management by...

Description: Approx. 1 hr 43 min. This video presents the lecture portion of a half-day tutorial. Case studies and a...