This paper provides a theoretical alternative to the prevailing perception of machine learning as synonymous with...

PechaKucha Presentation—This paper raises the implications of simplifying algorithms for scale and uplifting content that is damaging for human evolution. Technology is powerful because of its scale and also disempowering for the same reason. Scale is in the variables and online media, in the zest...

This 2019 project conducted in the US and the UK sought to understand which conspiracy theories are harmful and which are benign, with an eye towards finding ways to combat disinformation and extremism. This case study demonstrates how ethnographic methods led to insights on what “triggered”...

It is easy to become pessimistic, if not dystopic, about tracking technologies. The current digital services landscape promotes scoring, selecting and sorting of people for the purposes of maximizing profit. Machine logics rely on profiling characteristics and predicting actions, and management by...

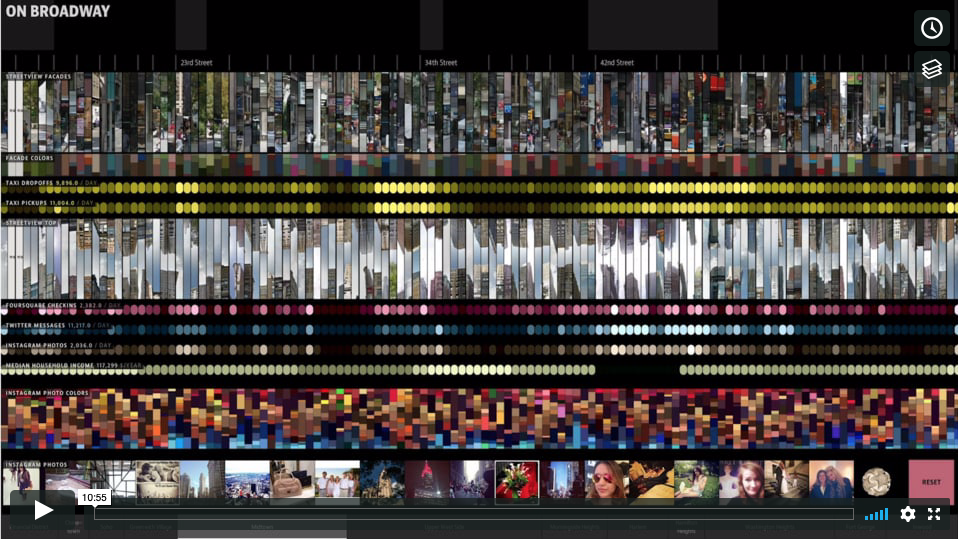

Approx. 1 hr 43 min. This video presents the lecture portion of a half-day tutorial. Case studies and a...

Virginia Eubanks is an Associate Professor of Political Science at the University at Albany, SUNY. Her most recent book is Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor, which danah boyd calls “the first [book] that I’ve read that really pulls you into the world of...

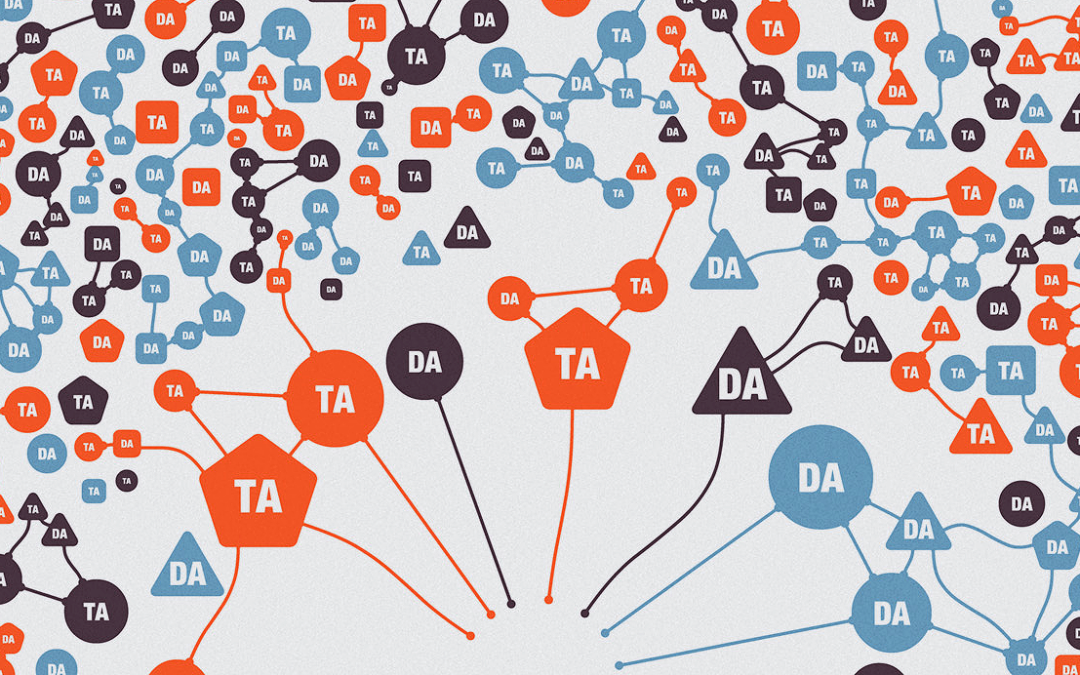

As algorithms play an increasingly important role in the lives of people and corporations, finding more effective, ethical, and empathetic ways of developing them has become an industry imperative. Ethnography, and the contextual understanding derived from it, has the potential to fundamentally...

The focus of this paper is to investigate deep learning algorithm development in an early stage start-up in which edges of knowledge formation and organizational formation were unsettled and contested. We use a debate by anthropologists Clifford Geertz and Claude Lévi-Strauss to examine these...

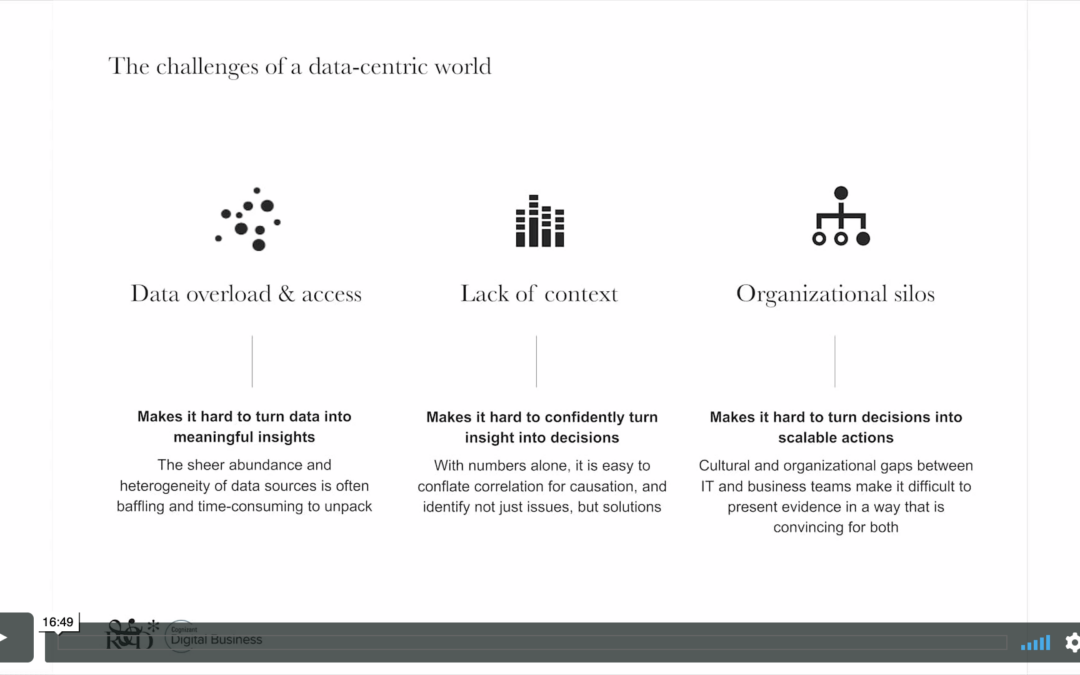

Case Study—We report on a two-year project focused on the design and development of data analytics to support the cloud services division of a global IT company. While the business press proclaims the potential for enterprise analytics to transform organizations and make them ‘smarter’ and more...

The successes of technology companies that rely on data to drive their business hint at the potential of data science and machine learning (DS/ML) to reshape the corporate world. However, despite the headway made by a few notable titans (e.g., Google, Amazon, Apple) and upstarts, the advances...