Automation

Automation shifts work, decision making, and pattern finding between people and mechanized systems, with profound implications for human relations and everyday life.

Overview

Automation has a long history and a defining role in the evolution of social, political, and economic structures. At its core, automation is an effort-shifting endeavor, moving activity from humans to mechanical, physical, or software systems. Tasks, pattern finding, and decisions are among the many things that have been and are being automated.

Automation has drawn repeated attention, both to what it takes to create it (how it is produced) and, perhaps more critically, to the impact it has, in local settings and more broadly. Do automated systems and workflows create efficiencies? Do they save labor, or just shift it elsewhere? Are the labor-saving ramifications good for business? What about for society? What's left out when things are automated, and what new possibilities does automation give rise to?

For social and humanistic observers, questions abound about how automated systems encode or subvert the relational dynamics of society. Concerns about how people are judged and treated, and about the subtle ways automated systems enable and perpetuate these treatments, show up, for instance, in attention to questions of fairness, taste, equity, and care. By shifting effort, automation changes human interactions and relations.

Ethnographic work, as demonstrated by the authors in this collection, deftly exposes where and how these shifts emerge and how they are responded to. Ethnographers are deeply attuned to the contextual realities of the people and practices they explore, realities that are dynamic and, as we see in these works, have no neutral standing. Rather, central to any system of automation are questions of how value is constituted and subverted, what counts, for whom, and when. These works reveal how unstable and evolving the answers to these questions are.

Key Articles

Tutorial: Ethics in Data-Driven Industries, Emanuel Moss and Friederike Schüür (2019). In their tutorial, Moss and Schüür provide a laser-sharp focus on the ways data work is automated through machine learning and artificial intelligence (ML/AI), showing how categorization, and with it meaning and action, come to be produced through automation. Revealing the categorical messiness of this data work, they highlight the challenges of achieving ethical and fair outcomes. ML/AI systems are unavoidably gated by measures of performance that demand tradeoffs. Do you want greater precision (the items a system flags are more likely to be correct), at the risk of excluding some relevant data, or greater recall (more of the relevant data is included), at the cost of more errors? The answers to such questions are not only highly contextual but also dynamic, since information needs, action needs, and expectations change as the system is deployed and redeployed across different settings.
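The precision/recall tradeoff described above can be made concrete with a small sketch. The scores, labels, and thresholds below are hypothetical toy values, not data from the tutorial, and `precision_recall` is an illustrative helper rather than any library's API:

```python
# Hypothetical toy data: classifier scores and true labels (1 = relevant case).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]


def precision_recall(scores, labels, threshold):
    """Flag every item whose score meets the threshold, then score those flags."""
    flagged = [s >= threshold for s in scores]
    tp = sum(1 for f, y in zip(flagged, labels) if f and y == 1)      # correctly flagged
    fp = sum(1 for f, y in zip(flagged, labels) if f and y == 0)      # wrongly flagged
    fn = sum(1 for f, y in zip(flagged, labels) if not f and y == 1)  # relevant but missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Lowering the threshold trades precision for recall.
for t in (0.85, 0.50, 0.25):
    p, r = precision_recall(scores, labels, t)
    print(f"threshold={t:.2f}  precision={p:.2f}  recall={r:.2f}")
```

With the strict threshold every flagged item is correct, but only two of the five relevant cases are found; with the loosest threshold all five are found, but three of the eight flagged items are wrong. Which point on that curve is "right" is exactly the contextual judgment the tutorial describes.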

Automating Inequality, Virginia Eubanks (2018). In her penetrating keynote, Virginia Eubanks exposes the "digital poorhouse" built through the proliferation of data categorized in particular ways as part of the predictive enforcement of social policies. In a point echoed in Moss and Schüür's tutorial, she shows how initial intentions of data inclusion and processing come to have different impacts over time and place. She suggests that people throughout Allegheny County's Children, Youth and Family system (one of several systems she examines) are well intentioned and try to do the right things, but are bound by data system automation that flags cases based on predefined categories. These categories carry meanings ill-suited to evolving situations, generating systemic forces that perpetuate negative effects over time. It is impacts, not intentions, that ultimately matter. Exposing the deep social inequalities proliferated through automated systems, she poses the question, "what if the problem with the coming age of AI and machine learning in social services is not broken systems, but rather systems that carry out the deep social programming, the dictate of the digital poorhouse to profile, police and punish poor people, to limit access to resources and police the behavior of the poor, what if they are doing that job too well, not too poorly?"

Calibrating Agency: Human-autonomy Teaming and the Future of Work amid Highly Automated Systems, Lee Cesafsky, Erik Stayton, Melissa Cefkin (2019). Cesafsky, Stayton and Cefkin attend to how users interact with advanced work systems, in terms of the interactional modalities designed into those systems. Their case concerns remote operators who monitor and guide autonomous vehicles from a central control room through ongoing interaction and coordinative action with the AI-driven system. Sharing with Eubanks an interest in the authority or agency of users to intercede or take an action, they propose four paradigms, each of which positions the human operator as a particular kind of laborer and thus has ramifications for the relational terms of work at play:

- As a "technical bandaid", to make up for technological shortcomings

- As a "social knower", bringing judgment and interpretation that are (thus far, at least) uniquely available through a human lens

- As a “machine trainer”, to produce the data needed by the system to improve

- As a "legal actor", present as the locus of responsibility (echoing Elish's 2019 EPIC talk, Moral Crumple Zones: Agency and Accountability in Human-AI Interaction)

Beyond User Needs: Meaning-Oriented Approach to Recommender Systems, Iveta Hajdakova, Deb McDonald, Sohit Karol (2020). In their case study, Hajdakova et al. reframe the underlying rationale on which Spotify's music recommender system was being conceived and developed. A core assumption, common to applications and web-based systems more broadly, is that people are there to do something: they have a job to do, hence the 'jobs to be done' (JTBD) paradigm of research for interaction design. Through their research, the authors reframe the relational modality through which users interact with the system, shifting from the utilitarian paradigm of JTBD to a meaning-based paradigm drawing on the work of Lucien Karpik (2010). Their case study not only reasserts people's relationship to their interactions with the AI-based system but also shows that music listening is not merely a matter of 'taste'; it is part of the relational experiences of people's lives.

Autonomous Individuals in Autonomous Vehicles: The Multiple Autonomies of Self-Driving Cars, Erik Stayton, Melissa Cefkin, Jingyi Zhang (2017). Stayton, Cefkin and Zhang also address questions of relationality raised through interactions with automated systems, asking fundamentally how autonomy challenges and potentially reconfigures notions of the self. They point out what they call "the strange polysemy at the heart of autonomy: one may be freed from certain tasks but also further embedded in sociotechnical systems that are beyond individual control." Who or what, in the end, is made autonomous?

Go Deeper

Designed for Care: Systems of Care and Accountability in the Work of Mobility, Erik St. Grey (formerly Stayton) and Melissa Cefkin (2018). The authors look at the proceduralization of transit work in a small city bus system to ask what happens to the care of people and things in an automated system. They show that care work exceeds what the automated system's features capture, and offer five key questions for designing for care.

Boundary Crossings: Collaborative Robots and Human Workers, Bruce Pietrykowski and Michael Foster (2019). The authors examine three case studies of the use of cobots in auto production, revealing three different effects on human agency: in one case agency is displaced, in another it is enhanced, and in the third it is expanded.

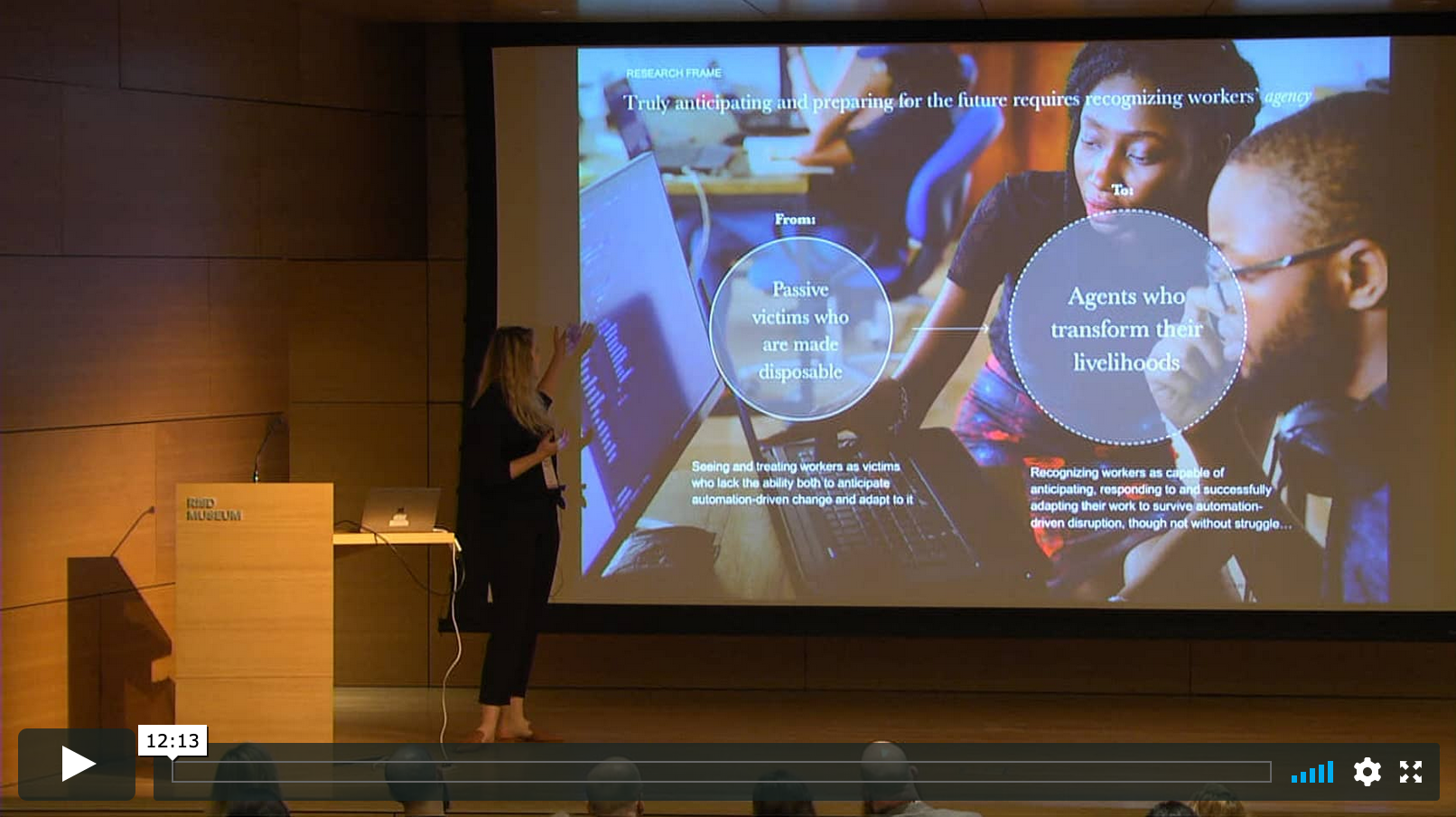

The Adaptation of Everyday Work in the Age of Automation, Tamara Moellenberg, Morgan Ramsey-Elliot, Claire Straty (2019). Echoing a long tradition of identifying worker ingenuity and resistance in the form of workarounds, this paper offers an upbeat assessment of how white-collar workers deploy adaptive tactics in the face of varying forms of automation, arguing that workers are adapting to new forms of work rather than being replaced.

Agency and AI in Consulting, Cengiz Cemaloglu, Jasmine Chia, Joshua Tam (2019). Based on field studies of professional consultants in three different contexts, the authors provide a framework that posits an analytical distinction, drawing from Michel, between "thinking" agency and "executional" agency. They use the interplay between the two as a basis for recommending automation approaches that enhance human agency at work.

Curator: Melissa Cefkin

Contributor: Elizabeth Anderson-Kempe